Thus, computing power will accelerate exponentially rather than linearly, and the cost of processing power to the user will tend to fall rapidly too.

Moore’s Law arises from experience rather than from some fundamental truth about the universe, but it certainly matches everyday experience: compare the storage in your phone (a solid-state drive rather than a hard disk) with the hard disks in personal computers at the turn of the 21st century to see the law in action close at hand.

Who was Moore, and how did he arrive at his law?

Moore’s Law is named for Gordon Moore, co-founder of Fairchild Semiconductor and of Intel. In 1965, Moore proposed an early version of his law, which predicted that the number of components in an integrated circuit would double every year. He later amended this, predicting that the count would keep doubling yearly until about 1980 and then slow to doubling every two years.

Moore’s Law is not a law in the sense that a law of physics is. As Moore recalled in a 2015 interview, “I just did a wild extrapolation saying it’s going to continue to double every year for the next 10 years”.

Even so, the prediction remained fairly accurate: at times processing power has grown faster than Moore predicted, and at others somewhat slower. This suggests that Moore was an accurate prophet, but he was also a self-fulfilling one, since he was describing an industry he worked in and helped lead. Moore’s Law is true partly because it became a truism and a guiding target for chip R&D in the years after he formulated it.

Factors affecting Moore’s Law

Gains for a processor of a given size were sustained by a corollary, Dennard scaling, named for IBM’s Robert Dennard. In 1974 Dennard observed that power per unit area stayed the same even as transistors shrank, so each halving of the area a transistor occupies let a chip of the same size hold twice as many transistors for the same power. This relationship began to break down in the early 2000s as chips reached the limits of the hardware technology of the time.
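To see why constant power density matters, here is a minimal sketch of the arithmetic of classic Dennard scaling. It assumes ideal scaling, in which every transistor dimension and the supply voltage shrink by the same factor k, and uses the standard approximation that dynamic power is proportional to capacitance × voltage² × frequency. The function name `dennard_scaling` is illustrative, not taken from any library.

```python
# Simplified model of ideal Dennard scaling: all dimensions and the supply
# voltage shrink by the same factor k (an assumption, not real process data).
def dennard_scaling(k: float) -> dict:
    """Return the relative change in key quantities when features shrink by k."""
    capacitance = 1 / k            # per-transistor capacitance scales with size
    voltage = 1 / k                # supply voltage scales down with size
    frequency = k                  # smaller transistors switch faster
    power_per_transistor = capacitance * voltage ** 2 * frequency  # P ~ C * V^2 * f
    transistors_per_area = k ** 2  # each transistor occupies 1/k^2 of the area
    power_density = power_per_transistor * transistors_per_area
    return {
        "transistors_per_area": transistors_per_area,
        "frequency": frequency,
        "power_per_transistor": power_per_transistor,
        "power_density": power_density,
    }

# Shrinking each dimension by sqrt(2) halves the area of a transistor.
result = dennard_scaling(2 ** 0.5)
for name, value in result.items():
    print(f"{name}: {value:.2f}x")
```

Shrinking each dimension by a factor of √2 doubles transistor density and halves power per transistor, so power density stays flat. In this simplified model the balance hinges on the voltage term: if voltage stops falling while density keeps rising, power density climbs.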

Other technical factors include new technology for producing chips, new ways of using that technology, and faster storage, such as solid-state drives and faster-spinning hard disks, which speeds up data access so that chips spend less time waiting and behave as if they were faster. Nor should we ignore what Moore calls “circuit and device cleverness”: tricks for extracting the most from essentially the same underlying technology.

Moore’s Law today

Moore’s Law held good until the early 2000s. From the 1980s until then, chip performance increased by about 52% year on year. After that, it plateaued. Why?
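For a sense of scale, a compound annual growth rate can be turned into a doubling time with logarithms. This sketch assumes the 52% figure compounds smoothly every year, which real chip generations do not; `doubling_time_months` is a hypothetical helper.

```python
import math

def doubling_time_months(annual_growth: float) -> float:
    """Months needed to double, given a compound annual growth rate (0.52 = 52%)."""
    # Solve (1 + g) ** years == 2 for years, then convert to months.
    return 12 * math.log(2) / math.log(1 + annual_growth)

print(f"{doubling_time_months(0.52):.1f} months")
```

At 52% a year, performance doubles roughly every 20 months.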

The problem wasn’t that Moore’s Law stopped applying to chips. Chips kept getting better, though, as we’ll see further down, the process wasn’t as smooth and simple as Moore’s Law might make it seem.

But they couldn’t keep getting faster, because of another crucial hardware relationship: the one between clock speed and power consumption. The two rise and fall together, and nearly all of that power ends up as heat.
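The speed-power link is usually summarized with the standard approximation for a chip’s dynamic (switching) power, where α is the fraction of the circuit switching each cycle, C the switched capacitance, V the supply voltage and f the clock frequency:

```latex
P_{\text{dynamic}} \approx \alpha \, C \, V^{2} f
```

Power rises in step with f, and because higher clock speeds typically also demand a higher supply voltage, the V² term compounds the effect. As a rough assumption, if V must rise in proportion to f, power grows with roughly the cube of the clock speed.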

As chips got better and faster, they got hotter and hotter, until it became impossible to cool them adequately. Today’s multi-core machines are a way around this, but only a partial one. If you’re wondering why clock speeds have stalled at a few gigahertz for years, this is the reason; clock speeds of 8.429GHz have been recorded, but required a liquid nitrogen bath to achieve.

In addition, growth in chip speed, complexity and power has never been smooth: new chip architectures take years to develop, and moving a new design from the drawing board to the production line takes months. So there’s usually a lag as the possibilities of one type of chip are exhausted, then a jump as new, more powerful chips become available.

So has Moore’s Law ceased to operate?

Moore’s Law under the microscope

(This section is indebted to Ilkka Tuomi’s “The Lives and Death of Moore’s Law”.)

Moore’s Law has always been a more complex, nuanced proposition than it looks at first glance. For one thing, as we’ve already seen, Moore himself called it a “wild extrapolation”, not a carefully weighed assessment. But it also hasn’t really held true for most of the last 40 years.

Performance, price, and time

Moore’s Law was originally supposed to set a floor for chip performance. The performance of the lowest-cost processors was supposed to double roughly every two years. (And we’ll be coming back to that “roughly”, too.)

But to make Moore’s Law hold true, and to arrive at that 52% year-on-year improvement I mentioned earlier, it had to be stretched into something much vaguer. First, it was expanded to cover all chips, including new or experimental ones, rather than chips available to end users (the common-sense approach) or the lowest-priced chips.

Secondly, it had to be expanded to include multiple definitions of accelerated processing, which bear little direct similarity to Moore’s original formulation. In fact, there are at least three commonly used interpretations: processing power on a chip doubling every 18 months, as Bill Gates said in 1996; generally available computing power doubling every 18 months, as Al Gore remarked in 1999; or the price of computing power halving roughly every 18 months, as economist Robert J. Gordon put it in 2000. These are all similar, but they don’t amount to exactly the same thing.

Additionally, all of these examples (and many others) use an 18-month rather than a 2-year period. One reason may be that 18 months is halfway between one year and two years: since Moore prophesied one rate and later the other, hasty readers have split the difference. Another explanation comes from Moore himself, who says a colleague at Intel came up with the figure by combining Moore’s 2-year prediction with increases in processor clock frequency, which measures how fast a chip completes a computing cycle rather than how many transistors it has.
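One plausible way such a combination could yield 18 months is easy to sketch, assuming (hypothetically) that performance is simply transistor count multiplied by clock frequency, with each growing exponentially. When two exponential factors multiply, their doubling rates add, so the combined doubling time is the reciprocal of the sum of the reciprocals. The helper name and the 72-month clock figure below are illustrative assumptions, not historical data.

```python
def combined_doubling_time(*doubling_times_months: float) -> float:
    """Doubling time of a product of independently growing factors.

    Each factor grows as 2 ** (t / T_i), so the product grows as
    2 ** (t * sum(1 / T_i)); the combined doubling time is 1 / sum(1 / T_i).
    """
    return 1 / sum(1 / t for t in doubling_times_months)

# Hypothetical: transistors double every 24 months, clock frequency every 72.
print(f"{combined_doubling_time(24, 72):.1f} months")
```

Under this assumption, a 2-year transistor doubling combined with a clock speed doubling every 6 years gives an 18-month performance doubling. The sketch shows the shape of the argument, not what clock speeds actually did.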

The difference between a one-year and a two-year doubling time is substantial. Doubling every two years leads to a 32-fold increase per decade, if we measure computing power as components in an integrated circuit. Doubling every year leads to a 1,024-fold increase in ten years: not a difference you can conveniently split.
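The gap is easy to check with compound growth: a quantity that doubles every T months grows by a factor of 2^(120/T) over a decade. This sketch uses a hypothetical `growth_over` helper and also includes the popular 18-month figure for comparison.

```python
def growth_over(years: float, doubling_period_months: float) -> float:
    """Fold increase after `years` if a quantity doubles every `doubling_period_months`."""
    return 2 ** (years * 12 / doubling_period_months)

for months in (12, 18, 24):
    print(f"doubling every {months} months -> {growth_over(10, months):,.0f}x per decade")
```

An 18-month doubling period works out to roughly a 100-fold increase per decade, which on a multiplicative scale sits nearer the two-year figure than the one-year one.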

What’s really happened to chip performance?

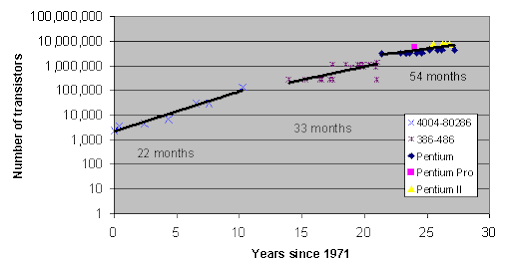

Here’s the data on Intel chips since 1971:

In the first ten years after 1971, transistors per chip doubled every 22 months, pretty close to Moore’s prediction, though only on average; the actual year-on-year or chip-by-chip rate of increase was irregular.

In the next stage of development, from about 1985 onwards, transistor counts doubled more like once every 33 months, well outside Moore’s parameters. After 1992 the pace was slower still, doubling roughly every 54 months.
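To compare these doubling periods with the 52% year-on-year performance figure quoted earlier, each can be converted into an implied annual growth rate. This sketch assumes smooth compound growth within each period and uses a hypothetical `annual_growth_rate` helper; these periods describe transistor counts rather than performance, so the comparison is loose.

```python
def annual_growth_rate(doubling_period_months: float) -> float:
    """Compound annual growth rate implied by a doubling period in months."""
    return 2 ** (12 / doubling_period_months) - 1

for months in (22, 33, 54):
    print(f"doubling every {months} months ~ {annual_growth_rate(months):.0%} per year")
```

On these assumptions, doubling every 22, 33 and 54 months corresponds to roughly 46%, 29% and 17% growth a year respectively.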

How much does this matter? Not as much as you might think. Microchips aren’t the only components of a computer; they don’t work in isolation, and they don’t have the final say over how fast a computer is. Even measuring computer speed is fraught with difficulty. If you accept, say, MIPS (Millions of Instructions Per Second) as a measure, what do you do about computers that handle instructions in parallel, complete multiple instructions per cycle, or handle much more complex instructions?
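A small, entirely hypothetical example shows how MIPS can mislead. It assumes two machines with the same clock speed running the same program: one needs many simple instructions, the other fewer, more complex ones that each take more cycles. The `Machine` class and all figures are invented for illustration.

```python
from dataclasses import dataclass

@dataclass
class Machine:
    """A hypothetical machine running one fixed program (all figures illustrative)."""
    name: str
    clock_hz: float                # clock cycles per second
    instructions: float            # instructions needed to finish the program
    cycles_per_instruction: float  # average cycles each instruction takes

    def runtime_s(self) -> float:
        """Wall-clock time to finish the program."""
        return self.instructions * self.cycles_per_instruction / self.clock_hz

    def mips(self) -> float:
        """Millions of instructions executed per second."""
        return self.instructions / (self.runtime_s() * 1e6)

# Machine A uses many simple instructions; machine B uses fewer, complex ones.
a = Machine("A", clock_hz=2e9, instructions=4e9, cycles_per_instruction=1.0)
b = Machine("B", clock_hz=2e9, instructions=1e9, cycles_per_instruction=2.5)
for m in (a, b):
    print(f"{m.name}: {m.mips():,.0f} MIPS, finishes in {m.runtime_s():.2f} s")
```

Machine A scores 2,000 MIPS against B’s 800, yet B finishes the program in 1.25 seconds to A’s 2 seconds: for this job, the machine with the higher MIPS rating is the slower one.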

Other common shorthands for speed can be just as misleading. There is no simple numerical measure of computer speed, so there’s no handy graph that can show us whether Moore’s Law is “right” or “wrong”.

Beyond (some) processors

We’ve mostly been talking about CPUs so far, though we haven’t exactly said so. The CPU, or Central Processing Unit, is the “engine” of your computer. In general, the faster your CPU is, the faster your computer is, though as we’ve seen, pinning down exactly what we mean by faster isn’t always so easy.

However, several things put a brake on CPU capacity. One is the rest of the computer: a chip can only answer questions as fast as the rest of the machine can ask them and deliver the answers to a screen or another component. Another is the technical limits of chip design.

But a fairly crucial one is that chips are designed to handle many different types of task. Some tasks are more complex and some must be prioritized, so modern microchips share much of their internal architecture with early mainframes: one of the calculations they have to do is work out which calculations to do, and when.

Complexity and robustness are usually in competition with efficiency, so is there an alternative that just handles one type of calculation, but very, very fast?

Yes. The GPU (Graphics Processing Unit) has one job: rendering graphics. GPUs can only process certain types of data, but for that kind of work they are orders of magnitude faster than CPUs, because they apply the same simple operation to huge amounts of data in parallel. That’s one reason why there’s currently a worldwide shortage of all processors, but especially GPUs. Although they were originally built for graphics processing, as you might expect, they were soon repurposed as super-high-speed processors for complex topographical imaging, for financial and other calculations, and more recently for mining digital assets.